Why does it seem that, despite ever-evolving technology and the billions of dollars being spent on cybersecurity, the attackers are winning? Well, in two words: they are.

Despite our best efforts to disrupt cyber attacks, it’s the current paradigm that isn’t working, not just the technology we deploy. Below, I discuss the current “defender’s paradigm” - the predominant thought model that still informs the defensive behavior and security posture of large parts of the cybersecurity community - and examine how we got here and what we can do about it.

The current defender’s paradigm is pretty simple: it means realizing that the cyberwar is going to be fought on your network, and preparing accordingly. The most valuable networks have thousands of endpoints, ever-changing rosters of users, and enclaves of incredibly valuable information distributed worldwide. As such, most organizations, either through concerted planning or trial and error, generally follow a six-step defensive model as outlined below.

Given that many organizations see cybersecurity problems through the same lens, they tend to deploy the same technologies to thwart attackers. Bottom line: your network isn’t unique.

A typical network includes VPNs, firewalls/SWGs, SSL break-and-inspect infrastructure, Identity and Access Management (IAM), AV scanning, sandboxing, and a Security Information and Event Management (SIEM) system to make sense of all the data the other technologies are creating, as well as a host of other tools.

However, almost all of these technologies are reactive. By the time something bad hits your tech stack, it’s too late. The attacker has the advantage.

Moreover, once an attacker gets into your network, the exploitation part is pretty easy, considering most organizations use the same COTS technologies to run their businesses. Attackers spend the majority of their time getting in; once inside, exfiltrating data or breaking things is relatively easy.

Pre-intrusion planning is the key. It’s the only time a defender has a decided advantage over an attacker in the current paradigm. Defenders need to do all they can to reduce the attack surface, with patching currently the most critical aspect of doing so.

Sadly, most organizations have mixed results at patching in a timely, effective manner. Studies show that 100 to 120 days typically pass between a patch release and the average organization finally getting around to pushing the update. For larger organizations running a more complex tech stack, this can take even longer.
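To make that patch lag concrete, here is a minimal sketch of how a team might measure its own. The patch IDs, dates, and the 30-day threshold are all made-up assumptions for illustration, not data from any study or product.

```python
from datetime import date
from statistics import mean

# Hypothetical records: (patch id, vendor release date, internal deployment date).
PATCHES = [
    ("PATCH-A", date(2023, 1, 10), date(2023, 4, 25)),
    ("PATCH-B", date(2023, 2, 14), date(2023, 3, 1)),
    ("PATCH-C", date(2023, 3, 14), date(2023, 7, 20)),
]


def patch_lag_days(released, deployed):
    """Days between the vendor releasing a patch and the organization deploying it."""
    return (deployed - released).days


def summarize(patches, threshold_days=30):
    """Return the average lag and the patches that exceeded the (assumed) threshold."""
    lags = {pid: patch_lag_days(rel, dep) for pid, rel, dep in patches}
    overdue = sorted(pid for pid, lag in lags.items() if lag > threshold_days)
    return {"average_lag": mean(list(lags.values())), "overdue": overdue}


if __name__ == "__main__":
    report = summarize(PATCHES)
    print(f"Average patch lag: {report['average_lag']:.1f} days")
    print(f"Deployed later than the threshold: {report['overdue']}")
```

Even a simple report like this shows where the window of exposure is widest, which is where pre-intrusion effort is best spent.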

Monitoring user accounts and traffic is generally considered the next most important thing for organizations to do proactively. These efforts fall into what many teams call the “last mile” problem. Your users pose a massive risk to your organization, but productivity and the bottom line trump security, so you make exceptions.

The monitoring step is also the most time-consuming and expensive. Logs are disparate, and perimeters are no longer finite or static. Most organizations don’t even have a full accounting of where all this data resides, let alone the workforce and technology to effectively analyze it. This creates the never-ending “do-loop” in which IT teams are only responding to the most immediate incidents, while more sophisticated threats sneak through.

Unlike traditional warfare where the home team has the advantage, cybersecurity defenders often do not have intricate knowledge or control of their own environment. Thus, the scales tip in favor of the adversary.

This problem is pretty unique to the cyber domain. It’s hard to invade a foreign country (e.g., Afghanistan) where the locals know the terrain and have time to prepare the battlespace. It’s much simpler to attack a network where the defenders are reactive and don’t even know where everything is.

Not exactly. I’m asking you to take a second look at the current defender’s paradigm. Our collective plan has been to pick the signal from the noise or to find the needle in the haystack. We aren’t good at either; hence cyber attacks and security spending are up and to the right. Spending more money, and adding more and more complex technology, is proving futile.

The technologies I outlined above are all trying to solve AROUND the problem of users going online to be productive members of the workforce. What if we took a second look at the problem we are trying to solve?

The browser has become the primary tool for organizations to operate in a connected and competitive environment. Yet instead of enabling organizations to leverage the power of the web, it has become synonymous with loss of control, governance and security. We spend billions trying to solve for the inherent security issues of supposedly “free” browsers.

This approach leaves the underlying problem unsolved: The locally installed browser, at its core, brings unknown, arbitrary code into your network and offers no control over who does what and when.

At Authentic8, we believe the paradigm needs to shift from trying to solve problems AROUND the browser to finally solving the browser issues within the browser itself.

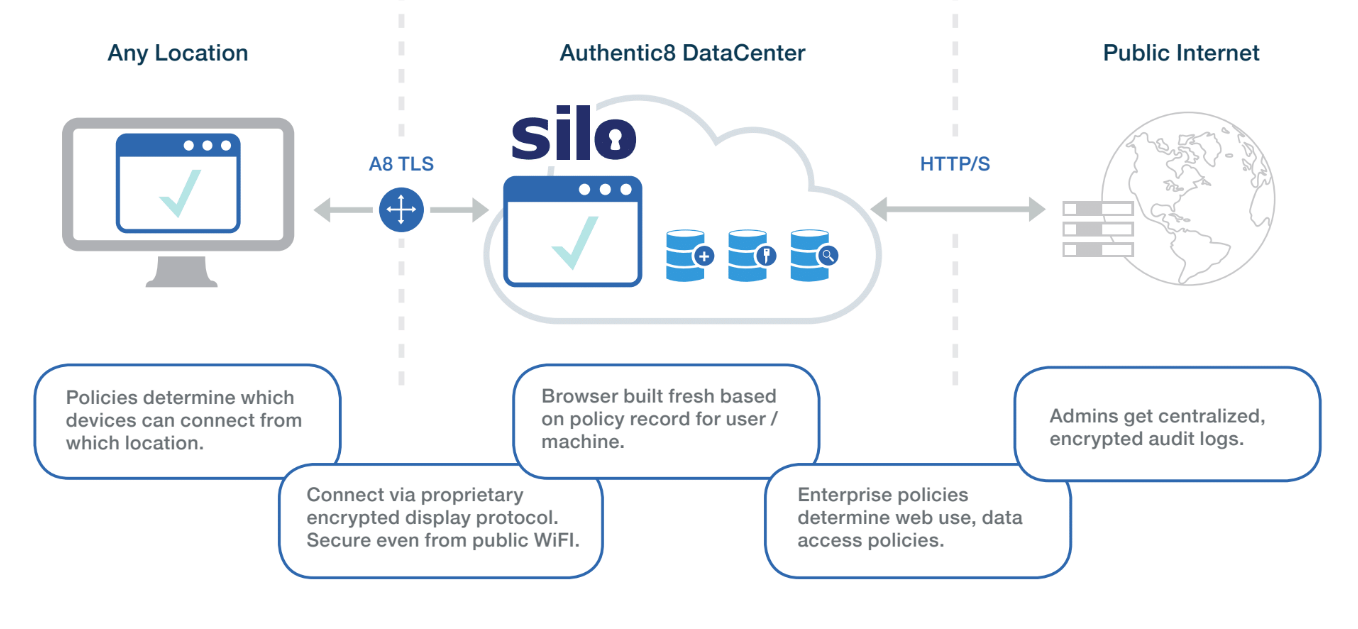

By cracking the browser open, putting it in the cloud, streaming benign encrypted pixels to the endpoint and adding robust administrative controls, organizations can change the current paradigm without affecting productivity or competitive advantage.

Defenders who include a cloud browser during the Preparation phase of network planning have a much more streamlined tech stack and a significantly reduced attack surface. The fight no longer takes place on your network. Click on a bad link? No problem, that drive-by download infects the disposable browser in the cloud, not a computer running on your network.

The early phases of the attacker’s model (determining the target, making entry, pivoting and gaining privileges) take up the bulk of the attacker’s time. A cloud browser effectively disrupts these phases and creates a choke point in the attacker’s planning. Think of the Battle of Thermopylae, during which a vastly outnumbered Greek force held off a numerically and technologically superior force of Persians for a significant period.

If I know where I’m vulnerable (patching, logging and letting my users be productive online), a small force (my IT team) has a much higher chance of holding off a technologically or numerically superior adversary. By rendering all web content in the cloud, you’re effectively starving a threat actor of options to invade your network and goading them to attack areas you can more easily defend. The economics of cyber attacks begin to shift in favor of the defender.

Non-obvious attack vectors (think SMB ports and the like) become the primary attack vectors and allow defenders to be narrow in focus and more proactive in approach. Pattern matching, IOC identification and behavioral indicators all become easier to uncover given the smaller attack surface. The “blinking lights” stop blinking as much and your IT teams gain leverage.

Oh, and did I mention you can significantly reduce your technology spend by making many parts of your existing tech stack redundant? To find out more, visit Silo Web Isolation Platform.